The New Backbone of Intelligence

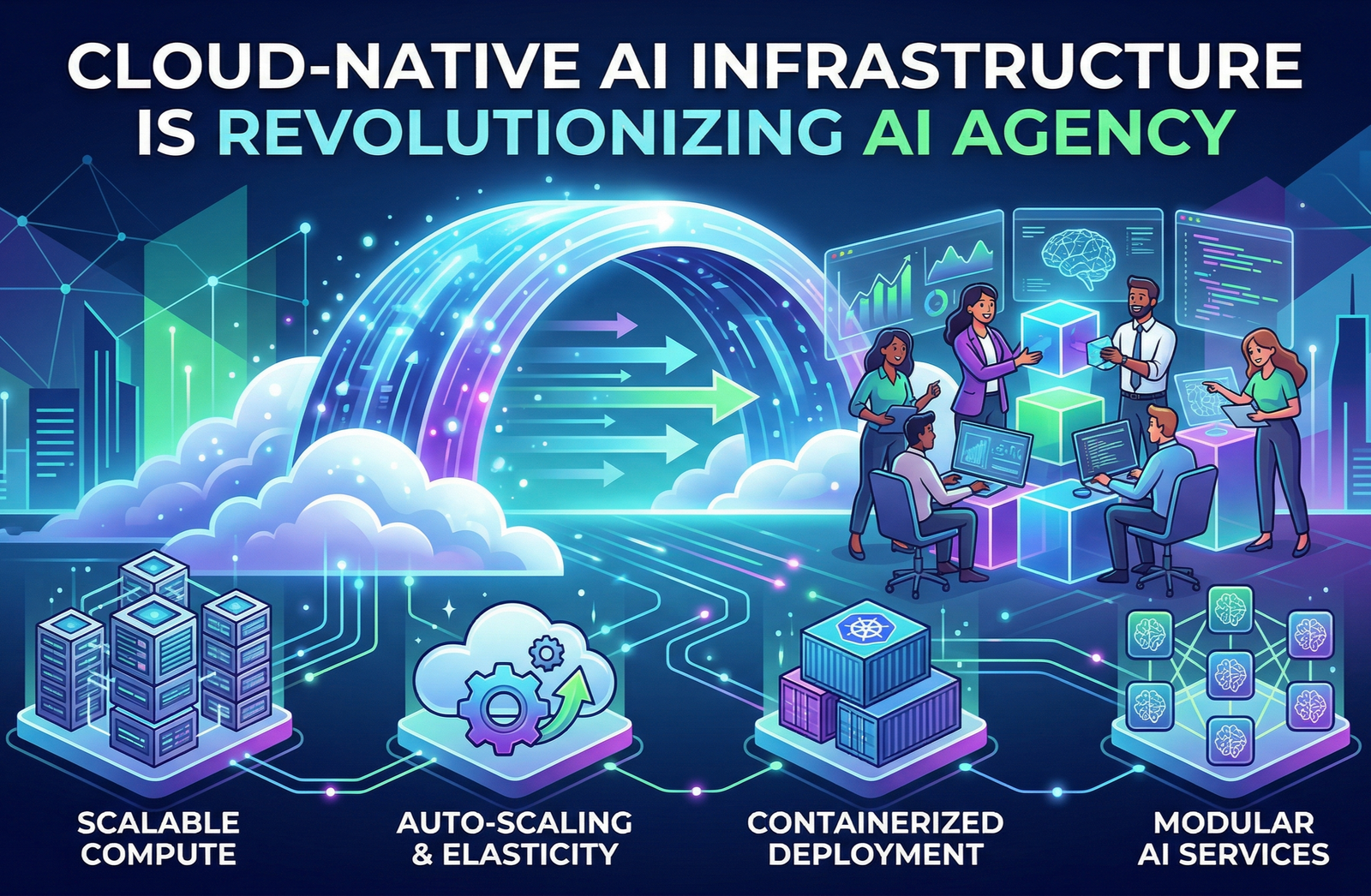

Training and deploying LLMs requires more than just code; it requires massive, distributed infrastructure. We explore how cloud-native clusters are evolving to meet this demand.

In the world of AI Agency, high-performance computing and scalable AI clusters are a requirement for survival.

Optimizing Token Throughput

Latency is the enemy of a good user experience. Discover the latest strategies for optimizing inference pipelines to handle thousands of concurrent requests without lag.