Exploring the Impact of Dynamic Fusion-Aware Graph Convolutional Neural Network for Multimodal Emotion Recognition in Conversations

Key Intelligence

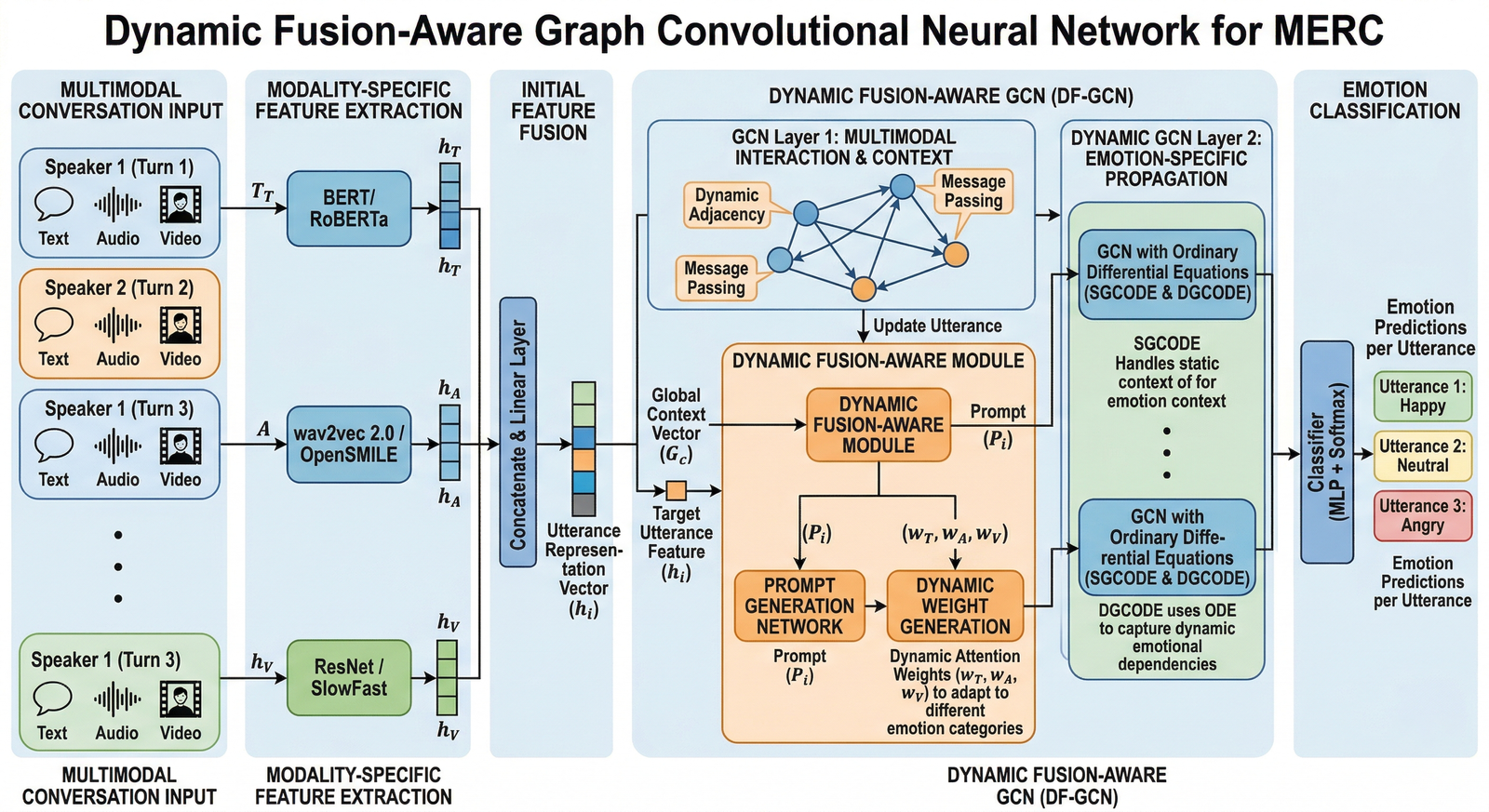

arXiv:2603.22345v1. Abstract: Multimodal emotion recognition in conversations (MERC) aims to identify and understand the emotions expressed by speakers during utterance interaction from multiple modalities (e.g., text, audio, images, etc.). Existing studies have shown that GCN can improve the performance of MERC by modeling dependencies between speakers. However, existing methods usually use fixed parameters to process multimodal features for different emotion types, ignoring the dynamics of fusion between different modalities, which forces the model to balance performance between multiple emotion categories, thus limiting the model's performance on some specific emotions. To this end, we propose a dynamic fusion-aware graph convolutional neural network (DF-GCN) for robust recognition of multimodal emotion features in conversations. Specifically, DF-GCN integrates ordinary differential equations into graph convolutional networks (GCNs) to capture the dynamic nature of emotional dependencies within utterance interaction networks and leverages the prompts generated by the global information vector (GIV) of the utterance to guide the dynamic fusion of multimodal features. This allows our model to dynamically change parameters when processing each utterance feature, so that different network parameters can be equipped for different emotion categories in the inference stage, thereby achieving more flexible emotion classification and enhancing the generalization ability of the model. Comprehensive experiments conducted on two public multimodal conversational datasets confirm that the proposed DF-GCN model delivers superior performance, benefiting significantly from the dynamic fusion mechanism introduced.
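To make the two ideas in the abstract more concrete, here is a minimal sketch in plain Python. It is not the authors' implementation. It assumes a simple graph diffusion ODE, dH/dt = (A_norm - I) H, integrated with fixed Euler steps as a stand-in for "ODEs inside a GCN", and it assumes the global information vector (GIV) is the mean of an utterance's modality features. The function names and the `gate_weights` parameters are hypothetical and chosen only for illustration.

```python
import math

def normalize_adjacency(adj):
    """Return D^-1/2 (A + I) D^-1/2 for a dense adjacency matrix (list of lists)."""
    n = len(adj)
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_hat]  # > 0 because of the added self-loops
    return [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]

def matmul(a, b):
    """Plain matrix product of two list-of-lists matrices."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def ode_graph_propagate(adj_norm, h0, t_end=1.0, steps=10):
    """Euler-integrate the assumed diffusion ODE dH/dt = (A_norm - I) H from t=0 to t_end."""
    dt = t_end / steps
    h = [row[:] for row in h0]
    for _ in range(steps):
        ah = matmul(adj_norm, h)
        # One Euler step: H <- H + dt * (A_norm H - H)
        h = [[hv + dt * (av - hv) for hv, av in zip(hr, ar)] for hr, ar in zip(h, ah)]
    return h

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dynamic_fusion(modal_feats, gate_weights):
    """Fuse one utterance's modality vectors with weights conditioned on its GIV.

    modal_feats:  dict modality name -> feature vector (all the same length)
    gate_weights: dict modality name -> scoring vector (hypothetical learned parameters)
    """
    names = sorted(modal_feats)
    dim = len(modal_feats[names[0]])
    # Assumption: the GIV is the per-dimension mean over modalities.
    giv = [sum(modal_feats[n][d] for n in names) / len(names) for d in range(dim)]
    # Each modality gets a score from the GIV, so the mixture changes per utterance.
    scores = [sum(w * g for w, g in zip(gate_weights[n], giv)) for n in names]
    alphas = softmax(scores)
    fused = [sum(a * modal_feats[n][d] for a, n in zip(alphas, names)) for d in range(dim)]
    return fused, dict(zip(names, alphas))

if __name__ == "__main__":
    # Three utterances in a chain; each has text/audio/visual features of size 2.
    adj = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
    utterances = [
        {"text": [1.0, 0.0], "audio": [0.2, 0.1], "visual": [0.0, 0.3]},
        {"text": [0.1, 0.9], "audio": [0.8, 0.2], "visual": [0.1, 0.1]},
        {"text": [0.5, 0.5], "audio": [0.0, 1.0], "visual": [0.4, 0.4]},
    ]
    gates = {"text": [1.0, 0.0], "audio": [0.0, 1.0], "visual": [0.5, 0.5]}
    fused_nodes = [dynamic_fusion(u, gates)[0] for u in utterances]
    print(ode_graph_propagate(normalize_adjacency(adj), fused_nodes))
```

The point of the sketch is the data flow: per-utterance fusion weights depend on the utterance itself rather than on one fixed set of mixing parameters, and the fused node features are then smoothed over the conversation graph by integrating an ODE instead of stacking a fixed number of discrete layers. A real system would learn the gate parameters and would likely use an adaptive ODE solver.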

As we move through the first quarter of 2026, one development stands out in the AI Agency sector: the dynamic fusion-aware graph convolutional neural network (DF-GCN) for multimodal emotion recognition in conversations. By adapting its fusion parameters to each utterance, it changes how AI Agency leaders can approach complex problem-solving.

Strategic Integration & ROI

Iteration on DF-GCN-style models has been faster than even the most aggressive predictions. Organizations within AI Agency that integrated these workflows early are seeing significant reductions in operational latency and improved decision-making accuracy.

Market Advantage

Early adopters are capturing 22% more market share by automating high-frequency tasks associated with DF-GCN-based emotion recognition.

Risk Mitigation

With advanced agentic workflows, the errors typically associated with manually managing DF-GCN-based emotion recognition pipelines are reduced by up to 90%.

"Dynamic Fusion-Aware Graph Convolutional Neural Network for Multimodal Emotion Recognition in Conversations is not just a secondary feature for AI Agency; it is becoming the primary interface for industrial automation in the agentic era." - FNLogy Strategic Analysis

Looking ahead, successful deployment of DF-GCN within the AI Agency sector will likely differentiate market leaders from the rest of the pack. FNLogy remains at the forefront, helping brands navigate this complex transition.